GGML and llama.cpp join Hugging Face

Today marks a significant milestone in the world of Local AI inference. GGML/llama.cpp, the popular open-source runtime for efficient LLM inference, has officially joined Hugging Face, the leading platform for AI developers and researchers. This collaboration is set to bring about exciting developments in the Local AI ecosystem, and I wanted to share my thoughts on what this means for developers, maintainers, and users alike.

Update: Please also check out the official announcement from Georgi Gerganov.

My journey

I first came across llama.cpp in early 2023, around the time Meta released the LLaMA models. Back then, I was a final-year student at INSA Centre Val de Loire, where my studies focused on cybersecurity and C++. I didn't have the hardware resources to run large language models, but I was fascinated by the idea of running them locally on consumer hardware. When I discovered llama.cpp, it was a perfect fit: it allowed me to run LLaMA models on my Framework Laptop with decent performance.

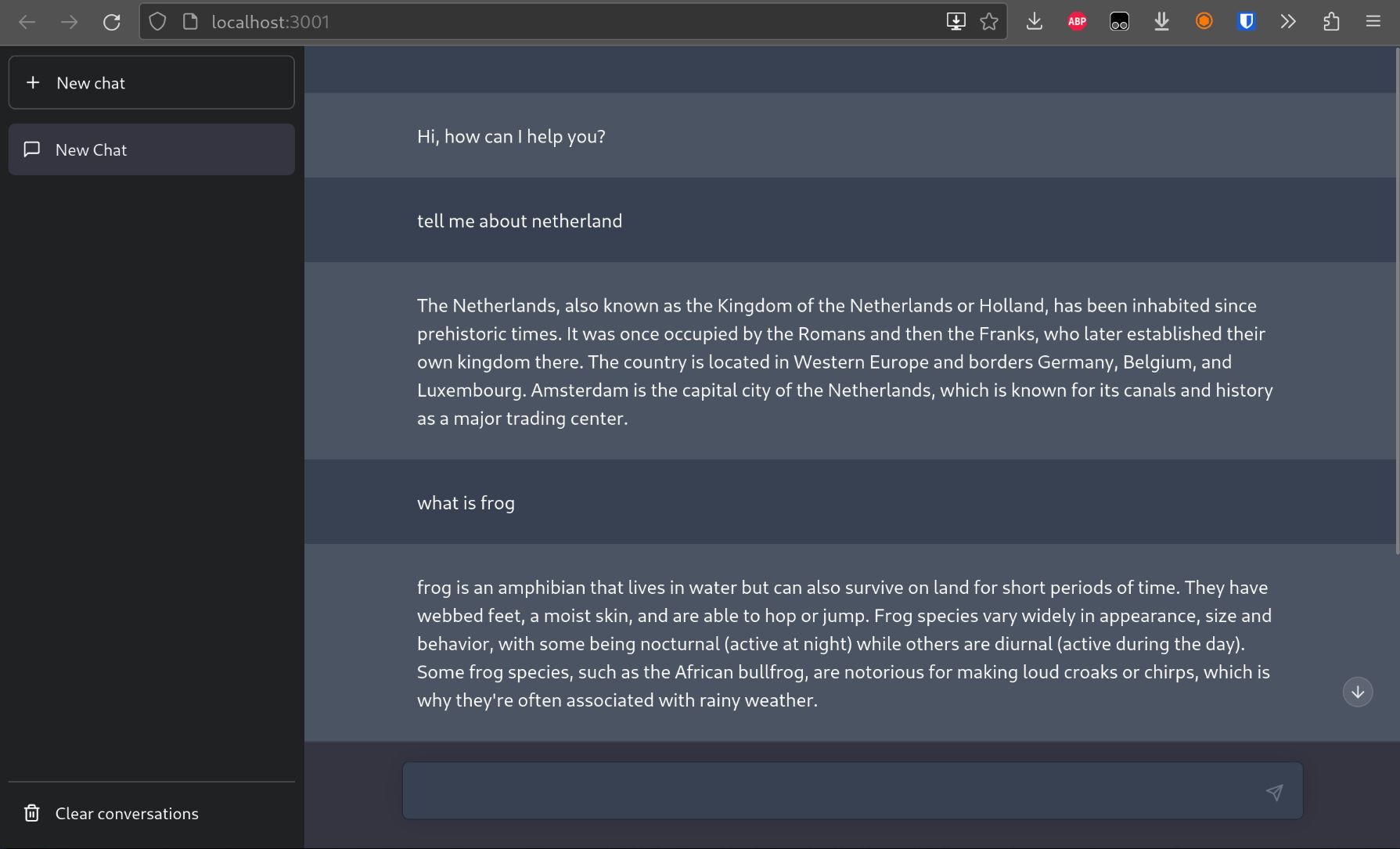

Among my first projects built around llama.cpp, I developed a Web UI for the Alpaca model, which is an instruction-tuned version of LLaMA. This project was a great learning experience and helped me understand more about llama.cpp.

Image: Screenshot of Alpaca Web UI

I started contributing to llama.cpp in late 2023, around the time when llama-server was introduced. The server implementation made it easier to deploy LLMs and use them in various applications. At the time, I was also working on a big server project for my apprenticeship at Snowpack, and llama-server was a nice playground for me to bridge my knowledge of C++ and server development.

Time flew by. In mid-2024, Julien Chaumond, CTO of Hugging Face, reached out to me about the possibility of joining Hugging Face. It was a difficult decision to make, as my degree was in cybersecurity, but I had been passionate about machine learning since high school. I decided to take the offer and joined Hugging Face in August 2024. Since then, I've been working on various impactful features in llama.cpp, including the overhaul of the multimodal support and the integration of many models, such as Gemma 3, LLaMA 4, Mistral Small, GLM-4V, and more.

Things have been going great. And yesterday, when the news about GGML/llama.cpp joining Hugging Face was announced on our Slack channel, I was so happy. It felt like a recognition of the hard work that the community has put into llama.cpp, and it also opened up new opportunities for collaboration and growth.

What does this mean for me?

For me, most things will stay the same. The main difference is that the amount of energy needed for communication and coordination will be reduced. It may not sound like much, but it will be a huge quality-of-life improvement for me, as I am a very introverted person 😅

For reference, each time a new model comes out, the process usually involves setting up three communication channels: one between the partner and GGML, one between Hugging Face and GGML, and one between the partner and Hugging Face. And before everyone can get to work, there are also NDAs to sign. As you can see, there are a lot of moving parts, with plenty of room for duplicated effort, miscommunication and delays. With the new arrangement, there will be a single communication channel between Hugging Face and the partner, which will streamline the process and reduce the overhead.

With less communication overhead, I can focus more on the technical work and less on the organizational work. This means that I can spend more time coding, optimizing and testing before new models are released. A win-win for everyone!

What does this mean for llama.cpp?

As mentioned in the official announcement, llama.cpp will continue to be open-source and free to use. The development will continue as usual, and the project will benefit from the resources and support of Hugging Face.

This basically means all the administrative work, such as legal, financial, hiring and marketing, will be handled by Hugging Face, which allows maintainers to focus more on the technical work.

Personally, I look forward to recruiting more talented developers to join the team and contribute to llama.cpp. Last year, we recruited Aleksander Grygier because the Web UI project was getting a bit out of hand for me to manage alone. That was a great decision, as Aleksander has been doing an amazing job maintaining the Web UI and adding new features. The community loves him, and he's a great asset to the project.

With the new arrangement, we can reuse the same hiring process, the same policies and the same headhunting channels to recruit more talented developers to join the team.

Image: The new llama.cpp Web UI, maintained by Aleksander Grygier

Inference at the edge

This moment reminds me of the very inspirational discussion titled "Inference at the edge" that Georgi published way back in 2023. In that discussion, Georgi set out the core philosophy of llama.cpp, which is to be free, open-source, and focused on efficient inference on consumer hardware. This philosophy has guided the development of llama.cpp and has been a key factor in its success.

I believe the "migration" of llama.cpp to Hugging Face is an important step towards this goal. With the "open" mindset of Hugging Face, I believe we can accelerate the growth of llama.cpp, both in terms of technical features and community engagement. This will ultimately benefit the whole landscape of Local AI inference, as more developers and users will have access to powerful tools for running LLMs locally.

Looking forward, I'm excited to be part of this journey. Together, we can continue to push the boundaries of what's possible with consumer hardware. Let's build the future of AI together! 🤗🤗🤗

P.S. Huge kudos to Georgi, Julien, Lysandre, Victor, Anna, Stevie and anyone else who has been involved in making this happen. You guys rock! 🚀